Why Legacy Service Models Can’t Scale, and What Comes Next

Growth exposes what your service model is made of.

Early on, the weaknesses stay hidden. Small teams compensate through effort. Everyone knows the clients. Knowledge lives in conversations.

As the client base expands, cracks appear. Escalations increase. Response times stretch. Senior engineers become the safety net. Burnout rises quietly. Customers repeat themselves. Leadership spends more time firefighting than building.

Revenue may be growing, but predictability isn't. Without predictability, margins tighten, churn risk increases, and valuation stalls.

The problem isn't talent, effort, or even tooling. It's that most MSPs are trying to scale a service model built for a ticket-driven era—where capacity only increased when headcount did.

That model initially created growth. It doesn't create leverage today. And without leverage, scale eventually turns into strain.

The next stage of service delivery isn't about adding more layers to the same structure.

It's about replacing the structure entirely.

What a Service Delivery Model Really Is

Every MSP operates inside a service delivery model, even if they’ve never named it.

It’s the invisible structure that determines:

- How work enters the system

- How it gets routed

- Who owns it

- How success is measured

It organizes people, process, technology, and metrics into something that’s supposed to deliver consistent outcomes.

And most of those models were built long before AI became a meaningful operational layer.

That’s the tension — we’re trying to scale modern expectations on top of legacy architecture.

The Landscape of Legacy Models

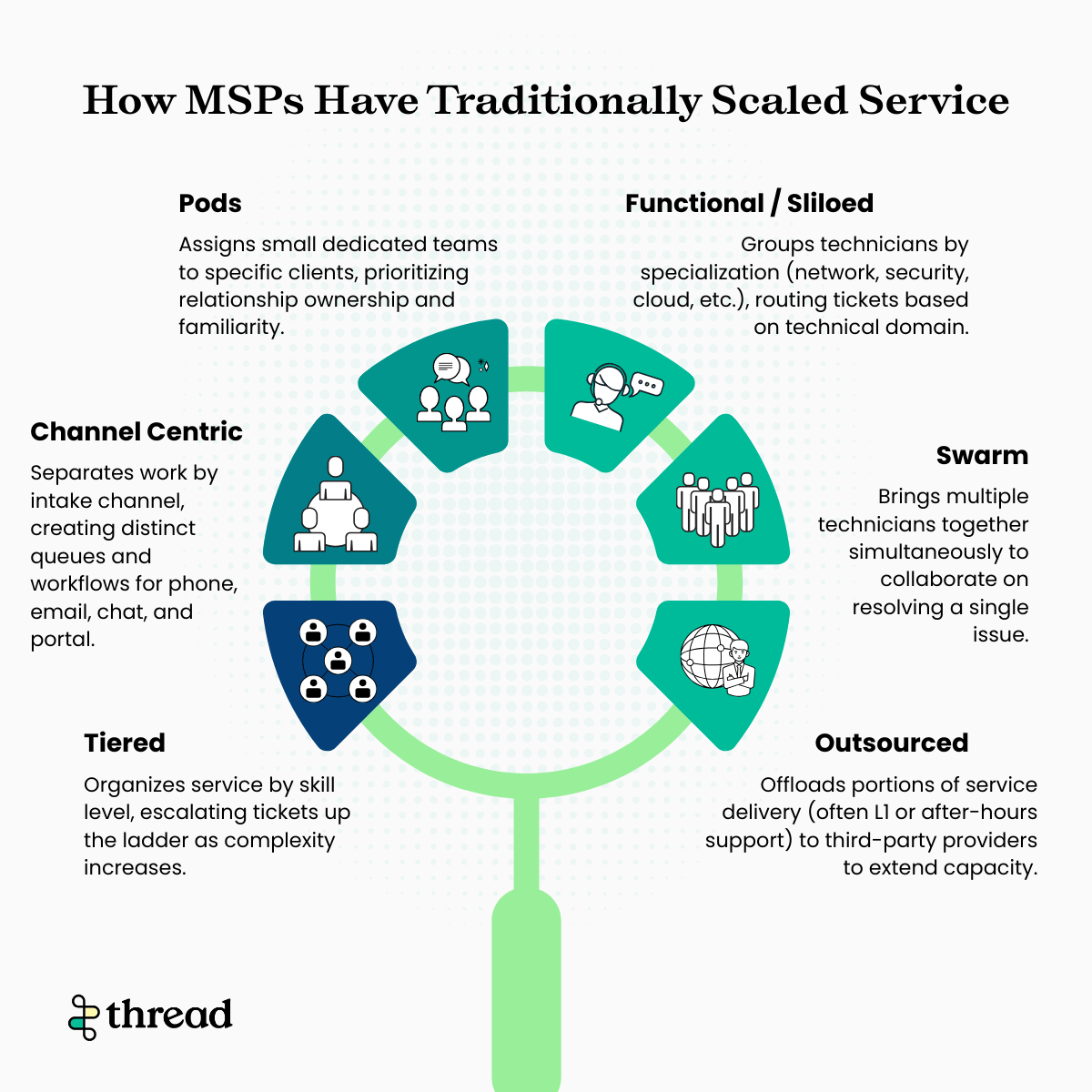

Before we go deeper, it’s worth acknowledging the landscape most MSPs are operating in today. Over time, service desks evolved into a mix of structures:

- Tiered (L1 / L2 / L3)

- Channel-centric

- Pods

- Functional or siloed teams

- Swarming models

- Partially outsourced layers

Each of these emerged to solve a scaling problem at a specific moment in time. But most were designed to manage ticket flow — not compound intelligence. As volume increases, these models tend to add layers, handoffs, and complexity rather than clarity.

Whichever of these six structures an MSP uses, the gravitational center is usually the same: tickets, queues, and SLA compliance.

Let’s dig a little deeper into the most common ones.

The Tiered Model: Designed for Control, Not Continuity

The L1 / L2 / L3 structure was built for efficiency and cost control.

Entry-level techs handle the volume, escalations move up the ladder and specialists intervene when needed.

On paper, it’s clean. In practice, it creates restarts. Each escalation is a handoff, and each handoff is a point of friction. Every friction point increases the chance that the customer has to repeat themselves. The model optimizes for resolution time and SLA performance. It does not optimize for continuity of experience.

As ticket volume increases, the model doesn’t become more intelligent; it becomes more layered.

Scaling within this structure means adding more tiers, more routing rules, more oversight.

The Channel Model: Organized Internally, Fragmented Externally

Many MSPs divide service by intake channel:

Phone / Email / Chat / Portal

Each has its own queue, its own workflow and sometimes even its own team.

Internally, this can feel orderly, but customers don’t experience service in channels. To them, it’s one conversation.

When they switch channels, continuity often breaks. History and context get lost. Ownership becomes unclear.

The Pod Model: Relationship-Driven, but Human-Limited

Pods attempt to solve fragmentation by assigning small teams to specific clients.

It’s relationship-driven. Ownership-focused. Often beloved by customers.

But it scales by adding headcount.

Consistency depends on individuals. Knowledge lives in people’s heads. When someone leaves, continuity leaves with them.

The model can be strong — but it’s not structurally intelligent. It’s structurally dependent.

The Common Thread: Tickets at the Center

Across these models, one thing remains constant: the ticket is the organizing unit.

- Work is measured per ticket

- Ownership transfers per ticket

- Routing decisions happen per ticket

But customers don’t care about tickets. They experience outcomes.

That mismatch between how MSPs organize service and how customers experience it is where scaling starts to break.

The Next Step: The Six Pillars of Intelligent Service Delivery

If legacy models organize around tickets, intelligent service delivery organizes around experience and intelligence.

Intelligent service delivery is built on six pillars.

1. Customer-Obsessed Experience

Legacy models optimize for SLA compliance. Intelligent models optimize for customer experience. That means continuity over handoffs. Context over speed metrics. Outcomes over ticket closure. The customer is no longer at the edge of the system. They are at the center of it.

2. Assistive AI

Assistive AI supports technicians in real time.

It summarizes history. Surfaces relevant knowledge. Drafts communication. Reduces repetitive effort. Instead of technicians hunting for context, context comes to them. Throughput increases without adding layers.

3. AI Routing

Routing is no longer manual, static, or queue-based. AI interprets intent, priority, and context before work ever reaches a technician. The right work lands in the right place the first time.

4. Agentic AI

Agentic AI goes beyond assistance. It can triage, gather missing information and execute defined resolutions. Not every task needs to be escalated. Not every request needs to consume human time. This is where intelligence begins to scale.

5. Thread Inbox (Service OS)

If legacy models revolve around the ticket, intelligent models revolve around the Thread.

The inbox becomes the operating system of service. One continuous conversation across time, channels and technicians.

6. Thread Intelligence

In legacy service models, data is stored. In intelligent service models, data compounds.

Thread Intelligence is the learning layer of the system. It captures what happened, why it happened, and how it was resolved, and makes that information usable in real time.

Patterns inform routing. Resolutions strengthen knowledge. Repeated issues surface automatically. Automation expands based on evidence, not guesswork.

The system improves because it has memory. It’s the difference between processing tickets and building intelligence.

The Model Has to Evolve

Every service delivery model eventually reveals what it was designed to optimize.

- Tiered models optimize control

- Channel models optimize intake

- Pods optimize relationships

But none of them were designed to optimize intelligence, and that’s why scaling them feels like such a lift. The only way to grow is to add layers, add rules, add people.

The next phase of service delivery isn’t about squeezing more efficiency out of legacy structures. It’s about replacing the center of gravity.

In the next post, we’ll go beyond the framework and into execution — how the six pillars work together in practice, what actually changes day to day, and what it looks like when service becomes an intelligent system instead of a ticket machine.

Because scaling doesn’t require more complexity.

It requires a different foundation.