Design and Trust in the Age of AI - Part 1

Transparency: The Foundation of Trust

Trust in AI

In the last few years, AI has boomed, expanding its influence beyond the technology industry into sectors including government, healthcare, and education. Systems like OpenAI’s ChatGPT, Google’s Gemini, and Anthropic’s Claude have made AI easily accessible to the everyday user, which has powered AI’s growth. However, a large percentage of people around the world remain skeptical of AI systems. Some distrust AI because they are unfamiliar with how to interact with it, while others cite hallucinations or inaccurate answers as a friction point.

A 2025 global study by KPMG found that only 58% of people worldwide trust AI systems (p. 27). In countries with advanced economies, the figure falls below half: “two in five are willing to trust AI systems by relying on their output and sharing information with these systems” (p. 31). At Thread, we are not blind to the reasons many people distrust AI. We embrace these systems anyway by understanding AI’s strengths and shortcomings and by acknowledging that we can play a role in showing our end users how AI actually works. We keep AI’s friction points in mind when designing Thread Service Desk’s AI experience to increase awareness, establish familiarity, and strengthen trust.

The Baseline User Experience

To reduce skepticism, we at Thread humanize the AI by making the system transparent. In the context of ethics and governance, transparency means openness and honesty: giving reasons for how actions and decisions are made and implemented. Adhering to this definition, the product and design team has intentionally introduced features that explain why the AI system behaves a certain way, so the user can understand it more easily.

In Thread Service Desk, the technician communicates with their client in a chat window. Before the chat thread is assigned to the technician, AI performs some actions on the issue, including categorizing it by issue type and prioritizing it by urgency. Most of the time, AI is accurate in its auto-categorization and auto-prioritization, but, as with all AI systems, it sometimes makes a mistake. In this chat window, we provide plain-language summaries that tell the user the reasoning behind AI’s judgement so that when it does make a mistake, the technician can identify the root cause.

Maybe the client came in with multiple requests and the AI based the categorization on only one of them. Perhaps the AI set the priority to a standard level because the user didn’t mention that the issue was affecting the whole company. In either case, offering an explanation helps the technician make sense of how AI analyzes the information it reads from both the client and the technician.

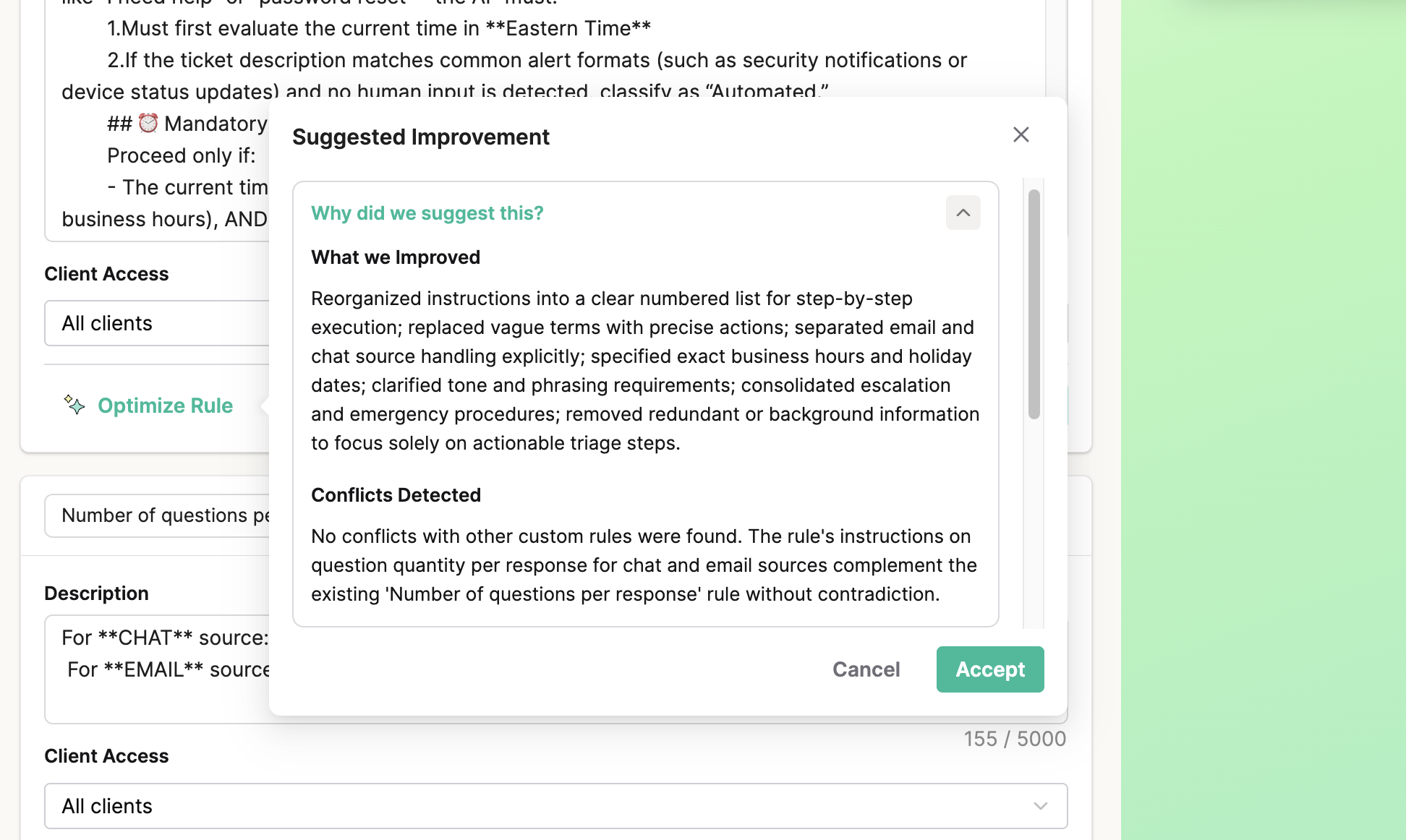

Another intentional display of transparency is in Thread Service Desk’s prompt writing section for an AI agent. When configuring an agent to carry out specific tasks and responsibilities, service desk admins have to construct the prompt in agent-friendly language. Similar to consumer AI tools like Grammarly that help anyone improve their grammar and style, the product and design team introduced a tool to help admins optimize their agent prompt. Instead of just rewriting the prompt for the user, we decided to explain what we improved, why we improved it, and any conflicts we detected with other prompts. That way, the user has full visibility into what the revisions were and why the system may or may not work.

Finally, to bring service desk admins closer to the how and the why behind AI’s decision-making, the product and design team is working on a new feature that shows the technician the justifications for why the agent generated a particular response to the client. With it, they can not only understand the output but also change it if desired. This feature, launching in May 2026, will remove more of the “black box” elements within Thread’s AI system and let our partners in on the inner workings of AI’s “brain”. Our goal is to make the AI’s intentions clear, giving admins full control over whether to take the AI up on its recommendations. In this manner, AI should feel like a working partner or assistant with whom one can iterate, not a black box that is never expected to make mistakes.

Setting the Foundation for More

The first step in building a foundation for trust is allowing people to understand where the other party is coming from. The ability to see where the AI sourced its information answers the natural human tendency to want to verify accuracy and understand how wrong conclusions were reached. Showing why these non-deterministic models may carry a certain margin of error makes the AI more human and the end user more empathetic. Through the features discussed, Thread strives to alleviate MSPs’ concerns about AI interacting with their clients and to lessen resistance to overall adoption. While transparency creates the ideal environment for trust to emerge, it alone is not enough. Since trust is built gradually over time, we cannot address trust without addressing consistency, which is our next theme. Stay tuned for next week’s article!